Trying autoresearch on Anthropic's performance engineering take-home test

In late January 2026, Anthropic published a blog post, "Designing AI-resistant technical evaluations," and released the latest version of their performance engineering take-home test on GitHub. The test asks you to optimize a given task on an abstract machine. A few days ago, Andrej Karpathy released a project called autoresearch, in which AI agents run research on single-GPU nanochat training. I saw some similarities between the two projects and decided to try autoresearch on Anthropic's take-home test.

I won't cover the details of the take-home test here; see the blog post above for more information. In short, there is a single metric to optimize: cycles, where lower is better.

Here are the results posted in Anthropic's GitHub repo:

- 2164 cycles: Claude Opus 4 after many hours in the test-time compute harness

- 1790 cycles: Claude Opus 4.5 in a casual Claude Code session, approximately matching the best human performance in 2 hours

- 1579 cycles: Claude Opus 4.5 after 2 hours in our test-time compute harness

- 1548 cycles: Claude Sonnet 4.5 after many more than 2 hours of test-time compute

- 1487 cycles: Claude Opus 4.5 after 11.5 hours in the harness

- 1363 cycles: Claude Opus 4.5 in an improved test time compute harness

- ??? cycles: Best human performance ever is substantially better than the above, but we won’t say how much.

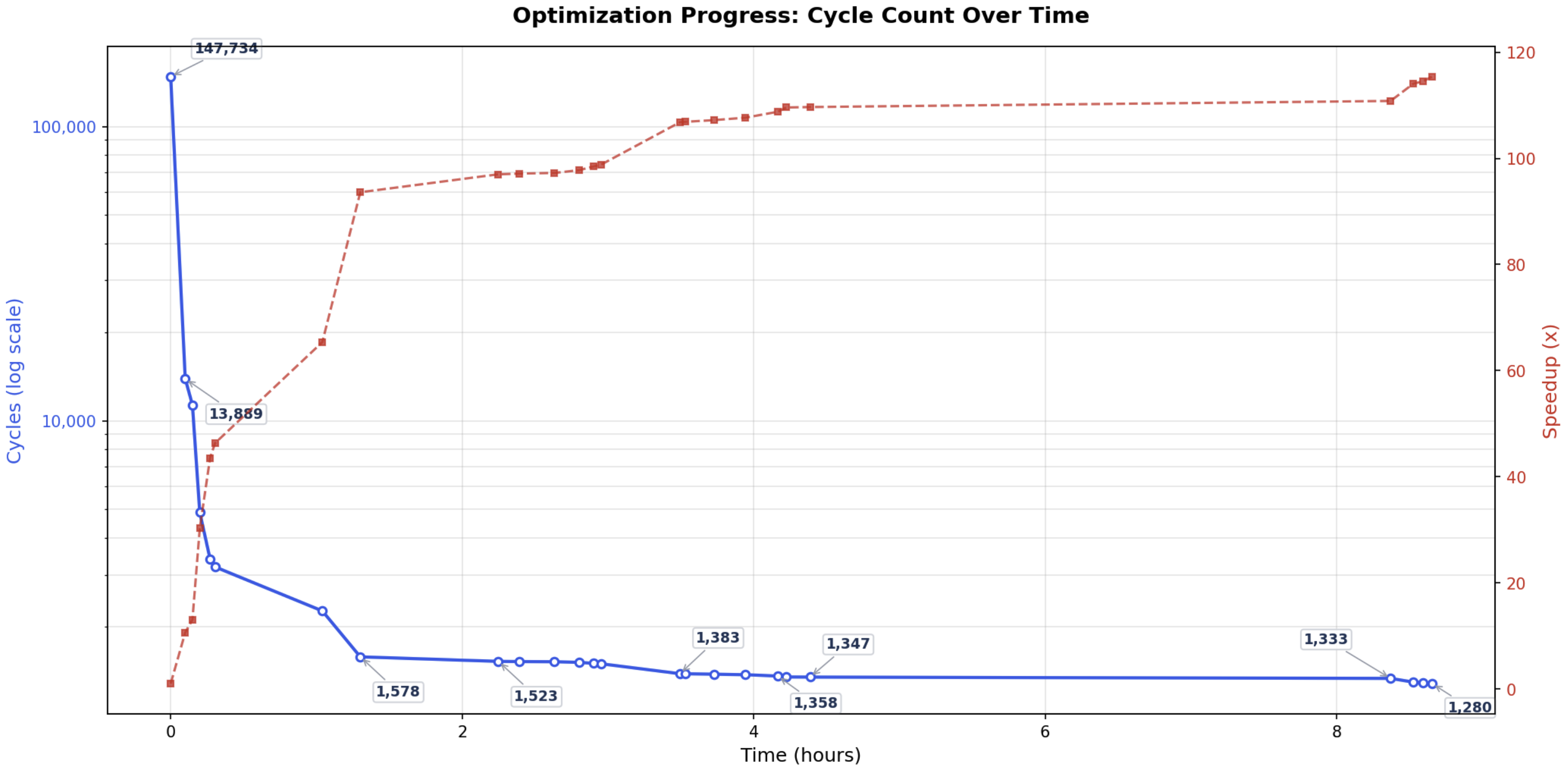

Here are the results I got from a single Codex session with GPT-5.4-xhigh. Within the first 5 hours, I paused the session for about an hour, and at the end I switched to Claude Code with Opus 4.6:

Some key observations from the results:

- GPT-5.4 reached 1523 cycles in about 2 hours in a single session, without any test-time compute harness. That beats the best human performance in 2 hours (1790 cycles), Claude Opus 4.5 after 2 hours in Anthropic's harness (1579 cycles), and even Claude Sonnet 4.5 after well over 2 hours of test-time compute (1548 cycles).

- GPT-5.4 beat the best published Opus 4.5 result (1347 vs. 1363 cycles) with much less time and compute.
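To put these gaps in proportion, here is a small Python sketch that computes the relative cycle reduction and speedup for each comparison above. The cycle counts are the ones reported in this post; the names `comparisons` and `reduction_pct` are purely illustrative.

```python
# Cycle counts reported in this post (lower is better): (baseline, new).
comparisons = {
    "GPT-5.4 (~2 h) vs. best human in 2 h": (1790, 1523),
    "GPT-5.4 (~2 h) vs. Sonnet 4.5, >2 h test-time compute": (1548, 1523),
    "GPT-5.4 best vs. Opus 4.5, improved harness": (1363, 1347),
}


def reduction_pct(baseline: int, new: int) -> float:
    """Percentage of the baseline's cycles that the new result removes."""
    return 100.0 * (baseline - new) / baseline


for label, (baseline, new) in comparisons.items():
    # Speedup is the ratio of cycle counts: how many times faster the new run is.
    print(
        f"{label}: {baseline} -> {new} cycles, "
        f"{reduction_pct(baseline, new):.1f}% fewer, "
        f"{baseline / new:.3f}x speedup"
    )
```

For example, 1790 to 1523 cycles is roughly 14.9% fewer cycles, while the margin over the best published Opus 4.5 result is only about 1.2%.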

Over the whole process, I wrote only about five short natural-language sentences. I first copied the program.md file from the autoresearch repo and asked Claude Code to adapt it for the take-home test. I proofread the file once myself while also asking Claude Code to proofread it with /ultrathink. Then I copied this prompt from the autoresearch repo and let Codex do the work:

> Hi have a look at program.md and let's kick off a new experiment! let's do the setup first.

I expected that to be all I needed to do, but Codex occasionally ended its turn early, even though I had told it not to stop until it got below 1000 cycles. So I checked in every so often and, whenever it had stopped, typed "keep going" to let it continue.

After it hit 1347 cycles, I hoped it might even beat the best known human performance (??? cycles), but it made no further progress for about an hour, so I stopped the session to avoid burning more tokens. Later, I tried Claude Code with Opus 4.6 to see whether it could improve on that. It quickly got down to 1280 cycles, but then seemed stuck around there for another hour, so I ended the experiment.

It's crazy to see how quickly LLMs are evolving. I'm both disappointed and happy to see that they can't beat the best human performance yet.